To bake in, or not to bake in, that is the question you must ask.

Keeping it simple…

Without a doubt, template creation and template sprawl are things people worry about when getting ready for their cloud environment. Maybe you have a Linux team that used PXE plus Kickstart in the past and you’re having to convince them to move to templates, or maybe you had a bunch of templates with everything baked inside. When it comes to cloud, I’m personally a big believer in keeping your template as bare as possible, provided that doesn’t add significant overhead to the build time.

This means stripping the template down to the base OS and layering everything on top.

The other key point to remember is that you want to use a similar approach to template management in your Public Cloud and in your On-Premises Datacenter. That way you aren’t managing two completely separate processes and creating more complexity.

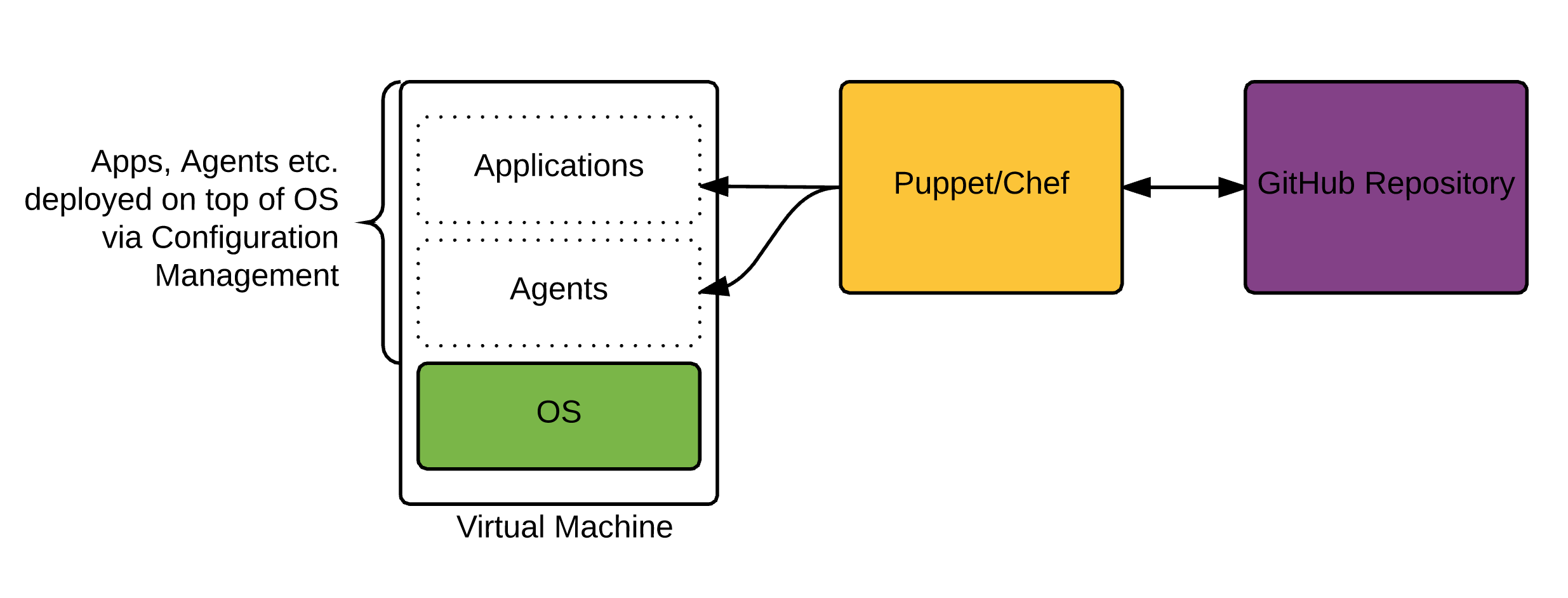

How do I configure things like agents, settings, etc. after the template is cloned?

The rise of configuration management tools in the past few years has been key. Puppet and Chef in particular have become extremely widespread. On top of that, Microsoft is constantly evolving PowerShell DSC, and we have other newcomers like SaltStack and Ansible that are quickly gaining traction.

Essentially, everything you install and configure on top of the deployed VM will be handled by Configuration Management. This allows us to completely abstract the configuration from the virtual machine. Think of the VM as a container (not to be confused with Docker) that just hosts a specific configuration. The more you can modularize the pieces that sit on top of the OS, the easier it is to manage. This does, however, come at a cost in speed, because each application has to be installed and configured after the VM is provisioned, potentially with a number of reboots along the way.
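
To make the idea a bit more concrete, here is a minimal Python sketch of a bare VM with modular configuration layered on top. It is only an illustration of the concept, not how Puppet, Chef, or any other tool works internally. Every name in it (`VM`, `Module`, `apply_module`, `converge`, the package and setting names) is hypothetical and made up for this example.

```python
from dataclasses import dataclass, field


@dataclass
class VM:
    """A freshly cloned VM: base OS only, nothing baked in (hypothetical model)."""
    name: str
    packages: set = field(default_factory=set)
    settings: dict = field(default_factory=dict)
    reboots: int = 0


@dataclass(frozen=True)
class Module:
    """One self-contained piece of configuration that sits on top of the OS."""
    name: str
    packages: frozenset = frozenset()
    settings: tuple = ()          # (key, value) pairs
    needs_reboot: bool = False


def apply_module(vm: VM, module: Module) -> bool:
    """Bring the VM in line with one module; return True if anything changed."""
    missing = module.packages - vm.packages
    drift = {k: v for k, v in module.settings if vm.settings.get(k) != v}
    if not missing and not drift:
        return False              # already in the desired state, nothing to do
    vm.packages |= missing
    vm.settings.update(drift)
    if module.needs_reboot:
        vm.reboots += 1           # the speed cost: reboots happen post-provisioning
    return True


def converge(vm: VM, modules: list) -> list:
    """Apply every module in order and report which ones made changes."""
    return [m.name for m in modules if apply_module(vm, m)]


# Example role: the same module list could target any clone of the bare template.
ROLE = [
    Module("monitoring-agent", packages=frozenset({"monitoring-agent"})),
    Module("hardening", settings=(("password_min_length", 14),), needs_reboot=True),
]

vm = VM("web01")
first_run = converge(vm, ROLE)    # both modules make changes
second_run = converge(vm, ROLE)   # nothing left to change
print(first_run, second_run, vm.reboots)
```

The point of the sketch is that the template itself never changes: the role is just a list of modules, and running it a second time is a no-op because the VM is already in the desired state.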

How are Configuration Management Tools Changing?

I’m fortunate enough to work with some great Puppet engineers at Ahead who work on these pieces daily. Rather than retype what one of my colleagues at Ahead has already written, I suggest you check out this blog article on the Ahead Blog. http://blog.thinkahead.com/the-present-and-future-of-configuration-management

Creating the Template:

There are a number of ways to do this, and I’m sure there are more than the ones I list here:

- vSphere: Clone from an Existing VM and prep it.

- vSphere: Create a fresh template from an ISO.

- Amazon: Create a Windows AMI from a running instance. (Essentially, start from a prebuilt Amazon AMI and customize it.)

- Amazon: Import your vSphere VM and convert.

In the next post, we will be drilling down in detail on creating a fresh Windows vSphere Template for use in your Cloud. As we progress to Public Cloud, we will come back to the Amazon AMI options.