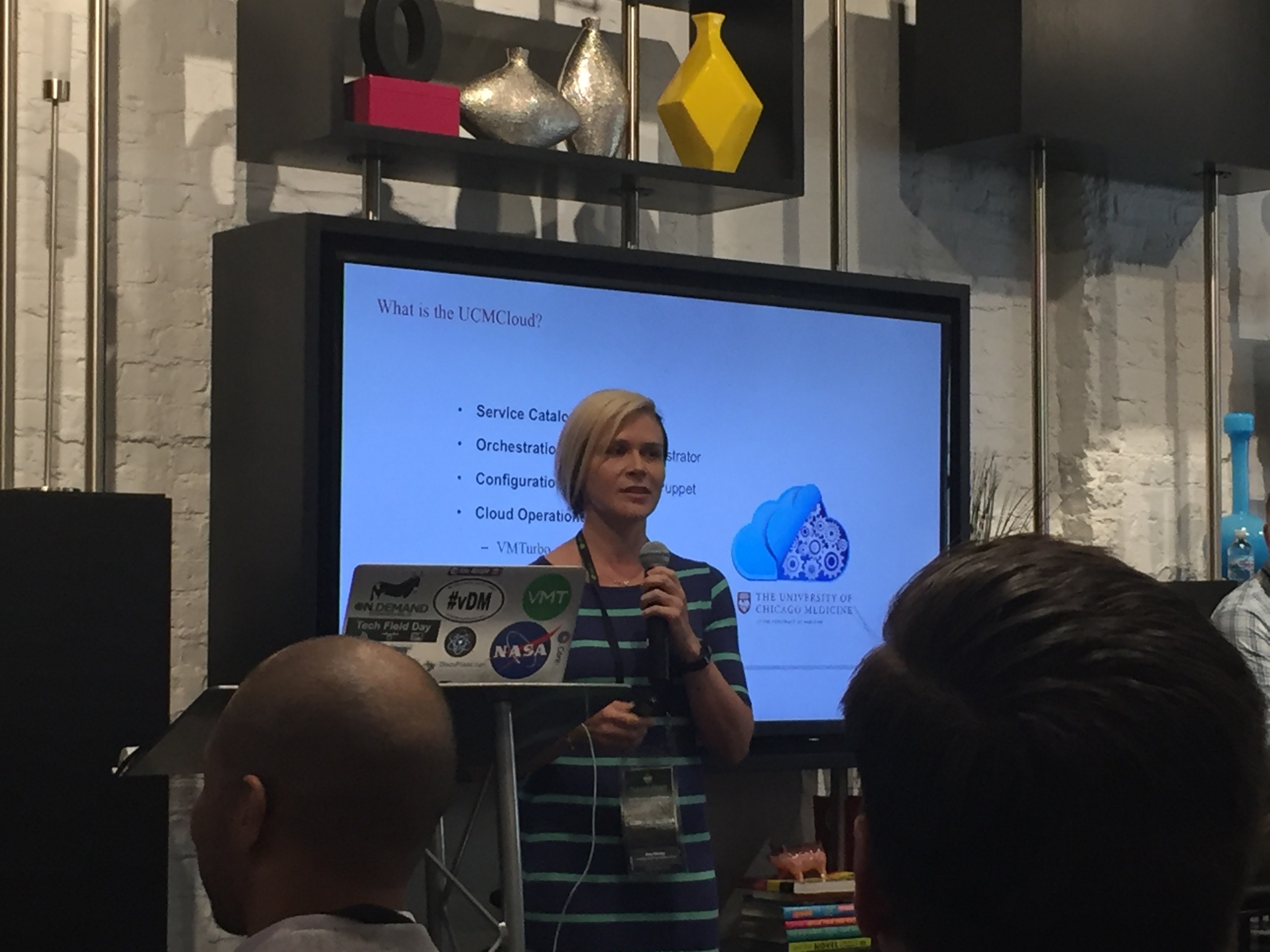

I woke up this morning coming off the insane high of yesterday’s Ahead Tech Summit. I got to present to hundreds of people and, more importantly, alongside fantastic colleagues who I love working with every day. While the conference was going on, I knew the vote was going on too, and asked people not to tell me the result.

When I finally checked the news at around 5pm Chicago time (11pm UK time), it said we were going to remain. Even though I had felt in the days leading up to the referendum that we should leave, I felt a sense of relief and togetherness at the remain vote. It said something like 57% remain, 43% leave at the time.

I went out to party and felt like life would just go on as normal. Then, waking up in the morning, I received some texts saying “wow, just wow”, only to find the result had changed and was in fact 52% leave, 48% remain. Suddenly shocked, I started to collect my thoughts and think about the vote again. We had actually decided as a country to leave. With that, we are entering new territory…changing direction after years of integrating more and more with the EU.

The Internet

Sadly, I looked at Twitter. I found people being hateful, Trump somehow thinking this compared to his campaign, and pure idiocracy. I won’t paste those tweets here because I think everyone is entitled to feel their own emotions and hopefully some of these viewpoints cool down soon.

I read my cousin’s post on Facebook which I did enjoy and I hope we see more of:

“51.9% of the (voting) population wanted to leave the EU. 48.1% of the (voting) population wanted to stay. Regardless of your political beliefs, ladies and gentlemen, it’s called democracy. Millions of people died so we can enjoy such freedom. This isn’t football, rugby, darts or stamp collecting. This is your country, don’t turn your back on it because the result didn’t go your way. Take a knee, drink water.”

The argument

What I see as I browse Twitter is single viewpoints from people. “The UK is anti-immigration”, and somehow that’s what people thought the whole thing was about? The UK is the biggest melting pot in the world. I think if you go to London and spend a day walking around, you will see how welcoming we have been to people from other countries. I don’t expect the British attitude of caring for others to change. There may be a nationalist undertone, as in many countries today, but that does not mean that’s the viewpoint of many of the people who voted leave.

For me there are fundamental problems with the EU. I would rather have stayed and worked them out, but it’s clear that path is unlikely.

The only reason I would vote leave is for one major point:

To restore powers back to the UK parliament

EU law has supremacy over UK law. We as a people did not elect the European Commission or the EU president for that matter. Yes, we have MEPs, but this does not go far enough. UK vetoes to laws get overruled by EU laws. We cannot govern ourselves as a people, and when you lose the ability to remove people from government as a people you have a problem. If we want a closer Europe, then let’s have an EU that supports that. Maybe every EU citizen has a vote for the EU president and the people in the European Commission? I feel as if the EU is built on protectionism and by nature has become undemocratic.

But why would I want to stay?

I wish we could find a different solution. There is something to be said for EU integration. I’ve always loved the idea of the EU from when I was in high school. Single trade, freedom of movement. It all sounds great. I can handle the extra money we give the EU. Given the UK’s GDP and an unemployment rate lower than most of Europe’s, we should help our EU neighbours.

Quite frankly though, I have loved going to Europe many times. I’ve enjoyed the Euro. I’ve enjoyed the idea of being able to one day work there if I wanted to with absolutely no issue. I can see the benefits.

Taking an even further step back. I miss Europe, and not just the UK. I’ve lived in America for some time now, I love it here as well, I love my friends here, and today I feel very far away from what is going on overseas. I have many European friends, saddened by the events of today, because it feels like a breakup. Except the kind where we are still living together and half of you doesn’t want to break up at all. You’ve spent years together working to be closer.

The other reasons for leaving…

1. Immigration

There are many other arguments being put forward and I’ll give my viewpoint on immigration.

I am all for immigration, but I do believe there is a sustainable amount

Immigration is beneficial. I believe in helping out others, but I also believe that, as with a company, if you bring in too many people at once, you lose the very reason they are coming there in the first place. We are a successful country, and people want to come to the UK. In the age we are in today the UK has low unemployment compared to other countries, and if we can offer people work we should.

Take the size down for a moment and just look at a company. I can even use my company as an example. We have a culture, one we all love. We look out for each other and work well together. If too many people suddenly joined, our culture could change. We have to hire fast today, but we do a good job of integrating people. However, there is a limit to that. I’d say we’ve even flirted with that limit before.

Now a country is far more complicated, especially one like the UK where we have what I still view as a fantastic health service. This in itself is an appeal for many who can’t get healthcare easily. If too many people immigrate into the UK we may not be able to sustain those services, among many others.

2. National Security

This one is questionable. I still believe working with our European partners we are stronger. Isolating ourselves to an island is a problem. Terrorism is a global problem and I don’t believe Brexit truly in the long term makes us safer here. Others may disagree.

3. Economy

Whether we are better off or not from an economic standpoint is hard to say. Yes, we give £19 billion a year to the EU. We get a rebate of £5 billion and receive around £4 billion back in EU payments, so in effect we give £10 billion to the EU. As a percentage of our GDP I don’t think that’s unreasonable given the European goals, and I could probably live with it if it meant a better Europe. If we just look at the EU economy as a whole, it’s really not been doing as well as other countries, so the case for tying ourselves to it is sometimes hard to make.

Either way, if we’re in it as a team, I don’t see this as the major reason for leaving.

In closing…

First of all, I certainly believe the UK isn’t turning into a nationalist country. I’m not a fan of Nigel Farage and UKIP. He had some good arguments in the debate, but the way he goes about politics is a problem in my opinion. Personal attacks are “Trump-like”, and I can’t vote for people who quite simply show no respect for others even if they disagree with them. Example: https://www.youtube.com/watch?v=ViPm0GUxw-M

There are other reasons in the leave camp as well. I wish we could have found a new solution rather than leaving. Isolationism is scary at best, and I hope as the dust settles the UK can become an enthusiastic nation that competes on the international stage while continuing to embrace our neighbours. I believe we have a great culture. I love going to the Lake District, Scotland, London, and most of all I miss Cambridge. I miss the many British people I’ve met during my life there and know that we are a good, caring nation. I hope that out of this, new ideas begin to emerge on ways to make the country great. That may be wishful thinking, but in the end I am glad that we will start to be able to govern ourselves more directly, rather than being governed by a central body in Brussels.

Saying that, I can’t help but feel extremely sad that we lost something. I just hope we can overcome it and move forward, not backward. Let’s continue to holiday in Spain, take the Eurostar to Paris and overcome any strange feelings we might have as we begin to part ways.

I will miss the EU stars on the UK car license plates. We may not be in the EU, but I hope we can be a united Europe.

What I wish for…a reform of the EU and a way to come back together before it’s too late. On the plus side, as my cousin said, I’m thankful we live in a democracy where we can even have a referendum.

References:

I wanted to leave some items here. I spent days reading and watching YouTube videos, and I highly recommend people start reading and watching the debates before making judgements online.

Remain vs Leave Debate: https://www.youtube.com/watch?v=uYTJGBBjkGo – I honestly did not think the remain campaign did a good job here. Maybe if they had had better people here this could have gone differently.

Research from my Uncle on why he’s voting Brexit: Why vote Brexit – I suggest reading this. He’s done a ton of work and research in this area.

PM David Cameron interviewed on Remain: https://www.youtube.com/watch?v=HO6MZcOQH0g

Google around, read the EU website. Learn how the EU works. I read and watched so many other pieces on this.